What happens when AI policy meets implementation — and when what companies say about safety, governance, and responsibility meets what they actually do. Analysis from an award-winning journalist and product strategist who has worked across media, government, and technology.

Two Supreme Courts Finalized AI Rules for Judges. The US Hasn’t.

The Philippines and Paraguay finalized binding AI rules for their judiciaries in early 2026. The US federal judiciary still operates on non-binding guidance.

AI Chatbots Are Becoming Mandated Reporters. Without the Manual.

Two governments want AI chatbots to report users. Garbarino and China’s new rule expose a mandated-reporting role built without accountability standards.

AI Companies Claim Free Speech to Block Anti-Discrimination Laws

xAI sued Colorado over its AI anti-discrimination law, arguing the rules violate the First Amendment. OpenAI backed an Illinois bill limiting AI liability.

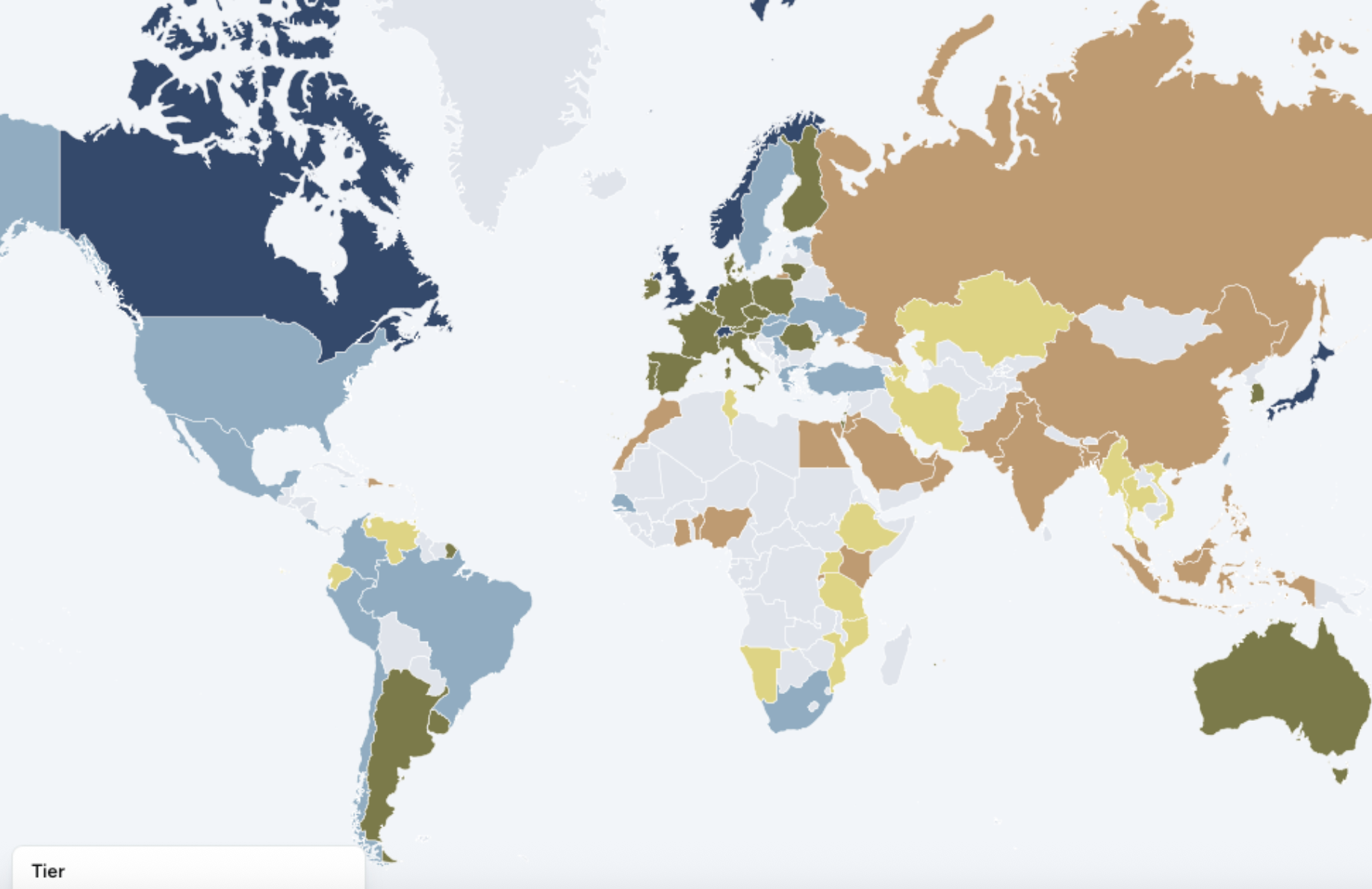

90 Countries Are Building AI Governance. The US Isn’t One of Them.

The CAIDP Index 2026 ranks 90 countries on AI governance. The US missed the top tier. Stanford shows Americans trust their government least on AI.

The FTC Is Using a 1914 Law to Police AI. It’s Working.

The FTC and SEC are punishing misleading AI product claims with laws written decades before AI existed. The harder cases are still coming.

AI Safety Has a Business Model Problem

Anthropic held its safety red lines and got blacklisted. OpenAI signed a Pentagon deal hours later. The market is punishing the company that said no.

OpenAI Cleared Its CEO. It Never Wrote Down Why.

Farrow and Marantz reveal OpenAI’s WilmerHale probe produced no written report. Uber published 47 recommendations. OpenAI published 800 words.

California and the Pentagon Have Opposite AI Safety Rules

California’s new AI order requires vendors to maintain safety standards. Federal procurement rules would override them. Anthropic is the test case.

Corporate AI Governance Protects Companies, Not People

A UNESCO and Thomson Reuters Foundation report on 3,000 companies finds 18% assess AI data risks but only 7% assess human rights impacts. The gap reveals whose interests governance serves.

Ireland Is About to Set the EU’s AI Agenda. It Also Hosts the Industry.

Ireland takes the EU Council presidency in July while hosting Anthropic, OpenAI, and Workday. Its dual role raises real questions about AI enforcement.

OpenAI Is Paying Workers to Map Their Own Replacement

OpenAI’s Project Stagecraft pays freelancers to map their own jobs for AI training. Oracle, Block, and Meta reveal a broader labor pattern.

Vietnam Has an AI Law. Its AI Reality Is More Uneven.

Vietnam’s new AI law is Southeast Asia’s first major AI framework, raising questions about digital sovereignty, adoption, and who AI governance serves.

The White House AI Framework Trusts Oversight That Doesn’t Exist

The White House AI framework would preempt state laws and rely on industry standards. A new NIST report says those standards haven’t been built yet.